Trying out OpenPose

Let's try out OpenPose.

Installation

openpose/installation.md at master · CMU-Perceptual-Computing-Lab/openpose · GitHub

Dependencies

There are quite a few, but CUDA 8 and cuDNN 5.1, the ones that are tedious to set up, happened to be installed in my environment already:

    $ nvcc --version
    nvcc: NVIDIA (R) Cuda compiler driver
    Copyright (c) 2005-2016 NVIDIA Corporation
    Built on Tue_Jan_10_13:22:03_CST_2017
    Cuda compilation tools, release 8.0, V8.0.61
    $ ls /usr/local/cuda/lib64/libcudnn.so.*
    /usr/local/cuda/lib64/libcudnn.so.5
    /usr/local/cuda/lib64/libcudnn.so.5.1.10

So those requirements were already met.

The other dependencies could be installed with apt-get.

Compiling from the command line

openpose/installation.md at master · CMU-Perceptual-Computing-Lab/openpose · GitHub

Testing

First, let's use the bundled samples.

I picked the images, since they look light to process.

    ~/openpose$ ./build/examples/openpose/openpose.bin --image_dir examples/media/
    Starting pose estimation demo.
    Auto-detecting all available GPUs... Detected 1 GPU(s), using 1 of them starting at GPU 0.
    Starting thread(s)
    Real-time pose estimation demo successfully finished. Total time: 9.159081 seconds.
    HDF5: infinite loop closing library
    D,T,F,FD,P,FD,P,FD,P,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E,E

The processed images are displayed one after another and then disappear.

Checking the options

$ ./openpose/build/examples/openpose/openpose.bin --help

openpose.bin: Warning: SetUsageMessage() never called

Flags from /home/kiyo/openpose/examples/openpose/openpose.cpp:

-3d (Running OpenPose 3-D reconstruction demo: 1) Reading from a stereo

camera system. 2) Performing 3-D reconstruction from the multiple views.

3) Displaying 3-D reconstruction results. Note that it will only display

1 person. If multiple people is present, it will fail.) type: bool

default: false

-3d_min_views (Minimum number of views required to reconstruct each

keypoint. By default (-1), it will require all the cameras to see the

keypoint in order to reconstruct it.) type: int32 default: -1

-3d_views (Complementary option to `--image_dir` or `--video`. OpenPose

will read as many images per iteration, allowing tasks such as stereo

camera processing (`--3d`). Note that `--camera_parameters_folder` must

be set. OpenPose must find as many `xml` files in the parameter folder as

this number indicates.) type: int32 default: 1

-alpha_heatmap (Blending factor (range 0-1) between heatmap and original

frame. 1 will only show the heatmap, 0 will only show the frame. Only

valid for GPU rendering.) type: double default: 0.69999999999999996

-alpha_pose (Blending factor (range 0-1) for the body part rendering. 1

will show it completely, 0 will hide it. Only valid for GPU rendering.)

type: double default: 0.59999999999999998

-body_disable (Disable body keypoint detection. Option only possible for

faster (but less accurate) face keypoint detection.) type: bool

default: false

-camera (The camera index for cv::VideoCapture. Integer in the range [0,

9]. Select a negative number (by default), to auto-detect and open the

first available camera.) type: int32 default: -1

-camera_fps (Frame rate for the webcam (also used when saving video). Set

this value to the minimum value between the OpenPose displayed speed and

the webcam real frame rate.) type: double default: 30

-camera_parameter_folder (String with the folder where the camera

parameters are located.) type: string

default: "models/cameraParameters/flir/"

-camera_resolution (Set the camera resolution (either `--camera` or

`--flir_camera`). `-1x-1` will use the default 1280x720 for `--camera`,

or the maximum flir camera resolution available for `--flir_camera`)

type: string default: "-1x-1"

-disable_blending (If enabled, it will render the results (keypoint

skeletons or heatmaps) on a black background, instead of being rendered

into the original image. Related: `part_to_show`, `alpha_pose`, and

`alpha_pose`.) type: bool default: false

-disable_multi_thread (It would slightly reduce the frame rate in order to

highly reduce the lag. Mainly useful for 1) Cases where it is needed a

low latency (e.g. webcam in real-time scenarios with low-range GPU

devices); and 2) Debugging OpenPose when it is crashing to locate the

error.) type: bool default: false

-display (Display mode: -1 for automatic selection; 0 for no display

(useful if there is no X server and/or to slightly speed up the

processing if visual output is not required); 2 for 2-D display; 3 for

3-D display (if `--3d` enabled); and 1 for both 2-D and 3-D display.)

type: int32 default: -1

-face (Enables face keypoint detection. It will share some parameters from

the body pose, e.g. `model_folder`. Note that this will considerable slow

down the performance and increse the required GPU memory. In addition,

the greater number of people on the image, the slower OpenPose will be.)

type: bool default: false

-face_alpha_heatmap (Analogous to `alpha_heatmap` but applied to face.)

type: double default: 0.69999999999999996

-face_alpha_pose (Analogous to `alpha_pose` but applied to face.)

type: double default: 0.59999999999999998

-face_net_resolution (Multiples of 16 and squared. Analogous to

`net_resolution` but applied to the face keypoint detector. 320x320

usually works fine while giving a substantial speed up when multiple

faces on the image.) type: string default: "368x368"

-face_render (Analogous to `render_pose` but applied to the face. Extra

option: -1 to use the same configuration that `render_pose` is using.)

type: int32 default: -1

-face_render_threshold (Analogous to `render_threshold`, but applied to the

face keypoints.) type: double default: 0.40000000000000002

-flir_camera (Whether to use FLIR (Point-Grey) stereo camera.) type: bool

default: false

-frame_first (Start on desired frame number. Indexes are 0-based, i.e. the

first frame has index 0.) type: uint64 default: 0

-frame_flip (Flip/mirror each frame (e.g. for real time webcam

demonstrations).) type: bool default: false

-frame_last (Finish on desired frame number. Select -1 to disable. Indexes

are 0-based, e.g. if set to 10, it will process 11 frames (0-10).)

type: uint64 default: 18446744073709551615

-frame_rotate (Rotate each frame, 4 possible values: 0, 90, 180, 270.)

type: int32 default: 0

-frames_repeat (Repeat frames when finished.) type: bool default: false

-fullscreen (Run in full-screen mode (press f during runtime to toggle).)

type: bool default: false

-hand (Enables hand keypoint detection. It will share some parameters from

the body pose, e.g. `model_folder`. Analogously to `--face`, it will also

slow down the performance, increase the required GPU memory and its speed

depends on the number of people.) type: bool default: false

-hand_alpha_heatmap (Analogous to `alpha_heatmap` but applied to hand.)

type: double default: 0.69999999999999996

-hand_alpha_pose (Analogous to `alpha_pose` but applied to hand.)

type: double default: 0.59999999999999998

-hand_net_resolution (Multiples of 16 and squared. Analogous to

`net_resolution` but applied to the hand keypoint detector.) type: string

default: "368x368"

-hand_render (Analogous to `render_pose` but applied to the hand. Extra

option: -1 to use the same configuration that `render_pose` is using.)

type: int32 default: -1

-hand_render_threshold (Analogous to `render_threshold`, but applied to the

hand keypoints.) type: double default: 0.20000000000000001

-hand_scale_number (Analogous to `scale_number` but applied to the hand

keypoint detector. Our best results were found with `hand_scale_number` =

6 and `hand_scale_range` = 0.4.) type: int32 default: 1

-hand_scale_range (Analogous purpose than `scale_gap` but applied to the

hand keypoint detector. Total range between smallest and biggest scale.

The scales will be centered in ratio 1. E.g. if scaleRange = 0.4 and

scalesNumber = 2, then there will be 2 scales, 0.8 and 1.2.) type: double

default: 0.40000000000000002

-hand_tracking (Adding hand tracking might improve hand keypoints detection

for webcam (if the frame rate is high enough, i.e. >7 FPS per GPU) and

video. This is not person ID tracking, it simply looks for hands in

positions at which hands were located in previous frames, but it does not

guarantee the same person ID among frames.) type: bool default: false

-heatmaps_add_PAFs (Same functionality as `add_heatmaps_parts`, but adding

the PAFs.) type: bool default: false

-heatmaps_add_bkg (Same functionality as `add_heatmaps_parts`, but adding

the heatmap corresponding to background.) type: bool default: false

-heatmaps_add_parts (If true, it will fill op::Datum::poseHeatMaps array

with the body part heatmaps, and analogously face & hand heatmaps to

op::Datum::faceHeatMaps & op::Datum::handHeatMaps. If more than one

`add_heatmaps_X` flag is enabled, it will place then in sequential memory

order: body parts + bkg + PAFs. It will follow the order on

POSE_BODY_PART_MAPPING in `src/openpose/pose/poseParameters.cpp`. Program

speed will considerably decrease. Not required for OpenPose, enable it

only if you intend to explicitly use this information later.) type: bool

default: false

-heatmaps_scale (Set 0 to scale op::Datum::poseHeatMaps in the range

[-1,1], 1 for [0,1]; 2 for integer rounded [0,255]; and 3 for no

scaling.) type: int32 default: 2

-identification (Whether to enable people identification across frames. Not

available yet, coming soon.) type: bool default: false

-image_dir (Process a directory of images. Use `examples/media/` for our

default example folder with 20 images. Read all standard formats (jpg,

png, bmp, etc.).) type: string default: ""

-ip_camera (String with the IP camera URL. It supports protocols like RTSP

and HTTP.) type: string default: ""

-keypoint_scale (Scaling of the (x,y) coordinates of the final pose data

array, i.e. the scale of the (x,y) coordinates that will be saved with

the `write_keypoint` & `write_keypoint_json` flags. Select `0` to scale

it to the original source resolution, `1`to scale it to the net output

size (set with `net_resolution`), `2` to scale it to the final output

size (set with `resolution`), `3` to scale it in the range [0,1], and 4

for range [-1,1]. Non related with `scale_number` and `scale_gap`.)

type: int32 default: 0

-logging_level (The logging level. Integer in the range [0, 255]. 0 will

output any log() message, while 255 will not output any. Current OpenPose

library messages are in the range 0-4: 1 for low priority messages and 4

for important ones.) type: int32 default: 3

-model_folder (Folder path (absolute or relative) where the models (pose,

face, ...) are located.) type: string default: "models/"

-model_pose (Model to be used. E.g. `COCO` (18 keypoints), `MPI` (15

keypoints, ~10% faster), `MPI_4_layers` (15 keypoints, even faster but

less accurate).) type: string default: "COCO"

-net_resolution (Multiples of 16. If it is increased, the accuracy

potentially increases. If it is decreased, the speed increases. For

maximum speed-accuracy balance, it should keep the closest aspect ratio

possible to the images or videos to be processed. Using `-1` in any of

the dimensions, OP will choose the optimal aspect ratio depending on the

user's input value. E.g. the default `-1x368` is equivalent to `656x368`

in 16:9 resolutions, e.g. full HD (1980x1080) and HD (1280x720)

resolutions.) type: string default: "-1x368"

-no_gui_verbose (Do not write text on output images on GUI (e.g. number of

current frame and people). It does not affect the pose rendering.)

type: bool default: false

-num_gpu (The number of GPU devices to use. If negative, it will use all

the available GPUs in your machine.) type: int32 default: -1

-num_gpu_start (GPU device start number.) type: int32 default: 0

-number_people_max (This parameter will limit the maximum number of people

detected, by keeping the people with top scores. The score is based in

person area over the image, body part score, as well as joint score

(between each pair of connected body parts). Useful if you know the exact

number of people in the scene, so it can remove false positives (if all

the people have been detected. However, it might also include false

negatives by removing very small or highly occluded people. -1 will keep

them all.) type: int32 default: -1

-output_resolution (The image resolution (display and output). Use "-1x-1"

to force the program to use the input image resolution.) type: string

default: "-1x-1"

-part_candidates (Also enable `write_json` in order to save this

information. If true, it will fill the op::Datum::poseCandidates array

with the body part candidates. Candidates refer to all the detected body

parts, before being assembled into people. Note that the number of

candidates is equal or higher than the number of final body parts (i.e.

after being assembled into people). The empty body parts are filled with

0s. Program speed will slightly decrease. Not required for OpenPose,

enable it only if you intend to explicitly use this information.)

type: bool default: false

-part_to_show (Prediction channel to visualize (default: 0). 0 for all the

body parts, 1-18 for each body part heat map, 19 for the background heat

map, 20 for all the body part heat maps together, 21 for all the PAFs,

22-40 for each body part pair PAF.) type: int32 default: 0

-process_real_time (Enable to keep the original source frame rate (e.g. for

video). If the processing time is too long, it will skip frames. If it is

too fast, it will slow it down.) type: bool default: false

-profile_speed (If PROFILER_ENABLED was set in CMake or Makefile.config

files, OpenPose will show some runtime statistics at this frame number.)

type: int32 default: 1000

-render_pose (Set to 0 for no rendering, 1 for CPU rendering (slightly

faster), and 2 for GPU rendering (slower but greater functionality, e.g.

`alpha_X` flags). If -1, it will pick CPU if CPU_ONLY is enabled, or GPU

if CUDA is enabled. If rendering is enabled, it will render both

`outputData` and `cvOutputData` with the original image and desired body

part to be shown (i.e. keypoints, heat maps or PAFs).) type: int32

default: -1

-render_threshold (Only estimated keypoints whose score confidences are

higher than this threshold will be rendered. Generally, a high threshold

(> 0.5) will only render very clear body parts; while small thresholds

(~0.1) will also output guessed and occluded keypoints, but also more

false positives (i.e. wrong detections).) type: double

default: 0.050000000000000003

-scale_gap (Scale gap between scales. No effect unless scale_number > 1.

Initial scale is always 1. If you want to change the initial scale, you

actually want to multiply the `net_resolution` by your desired initial

scale.) type: double default: 0.29999999999999999

-scale_number (Number of scales to average.) type: int32 default: 1

-video (Use a video file instead of the camera. Use

`examples/media/video.avi` for our default example video.) type: string

default: ""

-write_coco_json (Full file path to write people pose data with JSON COCO

validation format.) type: string default: ""

-write_heatmaps (Directory to write body pose heatmaps in PNG format. At

least 1 `add_heatmaps_X` flag must be enabled.) type: string default: ""

-write_heatmaps_format (File extension and format for `write_heatmaps`,

analogous to `write_images_format`. For lossless compression, recommended

`png` for integer `heatmaps_scale` and `float` for floating values.)

type: string default: "png"

-write_images (Directory to write rendered frames in `write_images_format`

image format.) type: string default: ""

-write_images_format (File extension and format for `write_images`, e.g.

png, jpg or bmp. Check the OpenCV function cv::imwrite for all compatible

extensions.) type: string default: "png"

-write_json (Directory to write OpenPose output in JSON format. It includes

body, hand, and face pose keypoints (2-D and 3-D), as well as pose

candidates (if `--part_candidates` enabled).) type: string default: ""

-write_keypoint ((Deprecated, use `write_json`) Directory to write the

people pose keypoint data. Set format with `write_keypoint_format`.)

type: string default: ""

-write_keypoint_format ((Deprecated, use `write_json`) File extension and

format for `write_keypoint`: json, xml, yaml & yml. Json not available

for OpenCV < 3.0, use `write_keypoint_json` instead.) type: string

default: "yml"

-write_keypoint_json ((Deprecated, use `write_json`) Directory to write

people pose data in JSON format, compatible with any OpenCV version.)

type: string default: ""

-write_video (Full file path to write rendered frames in motion JPEG video

format. It might fail if the final path does not finish in `.avi`. It

internally uses cv::VideoWriter.) type: string default: ""

Flags from src/gflags.cc:

-flagfile (load flags from file) type: string default: ""

-fromenv (set flags from the environment [use 'export FLAGS_flag1=value'])

type: string default: ""

-tryfromenv (set flags from the environment if present) type: string

default: ""

-undefok (comma-separated list of flag names that it is okay to specify on

the command line even if the program does not define a flag with that

name. IMPORTANT: flags in this list that have arguments MUST use the

flag=value format) type: string default: ""

Flags from src/gflags_completions.cc:

-tab_completion_columns (Number of columns to use in output for tab

completion) type: int32 default: 80

-tab_completion_word (If non-empty, HandleCommandLineCompletions() will

hijack the process and attempt to do bash-style command line flag

completion on this value.) type: string default: ""

Flags from src/gflags_reporting.cc:

-help (show help on all flags [tip: all flags can have two dashes])

type: bool default: false currently: true

-helpfull (show help on all flags -- same as -help) type: bool

default: false

-helpmatch (show help on modules whose name contains the specified substr)

type: string default: ""

-helpon (show help on the modules named by this flag value) type: string

default: ""

-helppackage (show help on all modules in the main package) type: bool

default: false

-helpshort (show help on only the main module for this program) type: bool

default: false

-helpxml (produce an xml version of help) type: bool default: false

-version (show version and build info and exit) type: bool default: false

Flags from src/logging.cc:

-alsologtoemail (log messages go to these email addresses in addition to

logfiles) type: string default: ""

-alsologtostderr (log messages go to stderr in addition to logfiles)

type: bool default: false

-colorlogtostderr (color messages logged to stderr (if supported by

terminal)) type: bool default: false

-drop_log_memory (Drop in-memory buffers of log contents. Logs can grow

very quickly and they are rarely read before they need to be evicted from

memory. Instead, drop them from memory as soon as they are flushed to

disk.) type: bool default: true

-log_backtrace_at (Emit a backtrace when logging at file:linenum.)

type: string default: ""

-log_dir (If specified, logfiles are written into this directory instead of

the default logging directory.) type: string default: ""

-log_link (Put additional links to the log files in this directory)

type: string default: ""

-log_prefix (Prepend the log prefix to the start of each log line)

type: bool default: true

-logbuflevel (Buffer log messages logged at this level or lower (-1 means

don't buffer; 0 means buffer INFO only; ...)) type: int32 default: 0

-logbufsecs (Buffer log messages for at most this many seconds) type: int32

default: 30

-logemaillevel (Email log messages logged at this level or higher (0 means

email all; 3 means email FATAL only; ...)) type: int32 default: 999

-logmailer (Mailer used to send logging email) type: string

default: "/bin/mail"

-logtostderr (log messages go to stderr instead of logfiles) type: bool

default: false

-max_log_size (approx. maximum log file size (in MB). A value of 0 will be

silently overridden to 1.) type: int32 default: 1800

-minloglevel (Messages logged at a lower level than this don't actually get

logged anywhere) type: int32 default: 0

-stderrthreshold (log messages at or above this level are copied to stderr

in addition to logfiles. This flag obsoletes --alsologtostderr.)

type: int32 default: 2

-stop_logging_if_full_disk (Stop attempting to log to disk if the disk is

full.) type: bool default: false

Flags from src/utilities.cc:

-symbolize_stacktrace (Symbolize the stack trace in the tombstone)

type: bool default: true

Flags from src/vlog_is_on.cc:

-v (Show all VLOG(m) messages for m <= this. Overridable by --vmodule.)

type: int32 default: 0

-vmodule (per-module verbose level. Argument is a comma-separated list of

<module name>=<log level>. <module name> is a glob pattern, matched

against the filename base (that is, name ignoring .cc/.h./-inl.h). <log

level> overrides any value given by --v.) type: string default:

Let's specify some options and save the results.

~/openpose$ ./build/examples/openpose/openpose.bin --image_dir examples/media/ -write_images write_images -write_images_format png

This saves the processed images to the write_images directory.
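When the same run needs to be repeated for several folders, the command above can be assembled from a script. The following is only a minimal sketch: the helper names `build_openpose_command` and `run_openpose` are hypothetical, the binary path is the one used in this post, and only flags that appear in the help output above are passed.

```python
import subprocess


def build_openpose_command(image_dir, output_dir, image_format="png",
                           binary="./build/examples/openpose/openpose.bin"):
    """Return the argument list equivalent to the command shown above."""
    return [
        binary,
        "--image_dir", image_dir,
        "--write_images", output_dir,
        "--write_images_format", image_format,
    ]


def run_openpose(image_dir, output_dir, image_format="png"):
    """Run OpenPose and return its exit code.

    Assumption: the current directory is the OpenPose checkout, so that
    relative paths such as the default model folder resolve.
    """
    return subprocess.call(build_openpose_command(image_dir, output_dir, image_format))


# Show the command line without executing it
print(" ".join(build_openpose_command("examples/media/", "write_images")))
```

Building the arguments as a list rather than one string avoids shell quoting problems if a directory name contains spaces.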

LIS on Windows 10

Notes written from memory.

Install Python 2.7

I believe I also installed CUDA and the library tools.

Install Unity

User registration is required to launch it.

Install Git

Installed from Git for Windows.

Getting LIS

$ git clone https://github.com/wbap/lis.git

Install the required Python libraries

$ pip install -r python-agent\requirements.txt

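Matching the Chainer version?

The code used here relies on Chainer's FunctionSet, so it appears to target the 1.x series.

The installation failed at first, so

I downloaded ???.h and x???.h from the web and put them in place,

then tried Chainer version 1.5, which installed successfully, so I went with that.

Results after training for a while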

Correcting orientation with the aruco module

Let's try correcting the orientation with the aruco module.

The library is really meant for AR and other more advanced uses, but it looked convenient for a perspective transform, so I gave it a try.

Circle detection also picks up things that merely resemble circles, so its accuracy tends to drop. The markers generated by this library seem much less prone to false detection.

    #!/usr/bin/env python
    # -*- coding: utf-8 -*-

    import cv2
    import numpy as np

    aruco = cv2.aruco
    dictionary = aruco.getPredefinedDictionary(aruco.DICT_4X4_50)

    filename = 'DSC_3069.jpg'
    #filename = 'DSC_3070.jpg'

    img = cv2.imread(filename)
    height, width, depth = img.shape

    # Halve the image size (integer division, since cv2.resize needs ints)
    div_n = 2
    img = cv2.resize(img, (width // div_n, height // div_n))
    height, width, depth = img.shape
    cv2.imwrite(filename + '.resize.png', img)

    corners, ids, rejectedImgPoints = aruco.detectMarkers(img, dictionary)
    print(corners)
    print(ids)
    #print(rejectedImgPoints)

    # Initialize the list of source points
    sPoints = [[0, 0] for _ in range(4)]

    for i, corner in enumerate(corners):
        points = corner[0].astype(np.int32)
        cv2.polylines(img, [points], True, (0, 255, 0), 2)
        cv2.putText(img, str(ids[i][0]), tuple(map(int, points[0])), cv2.FONT_HERSHEY_PLAIN, 2, (0, 0, 255), 2)

        # Store the first corner of markers 1, 0, 2, 3 in that order
        # for the perspective transform
        if ids[i][0] == 0:
            sPoints[1] = points[0]
        if ids[i][0] == 1:
            sPoints[0] = points[0]
        if ids[i][0] == 2:
            sPoints[2] = points[0]
        if ids[i][0] == 3:
            sPoints[3] = points[0]

    print(sPoints)

    cv2.imshow('drawDetectedMarkers', img)
    cv2.imwrite(filename + 'drawDetectedMarkers.png', img)

    # Perspective transform
    # Destination order: top-right, top-left, bottom-left, bottom-right
    rect = np.array([
        [940, 100],
        [100, 100],
        [100, 1290],
        [940, 1290],
    ])

    pts1 = np.float32(sPoints)
    pts2 = np.float32(rect)
    print(pts1)
    print(pts2)

    M = cv2.getPerspectiveTransform(pts1, pts2)
    img = cv2.warpPerspective(img, M, (width, height))

    cv2.imshow('getPerspectiveTransform', img)
    cv2.imwrite(filename + 'getPerspectiveTransform.png', img)

    cv2.waitKey(0)
    cv2.destroyAllWindows()
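The chain of `if` statements in the script silently leaves a `[0, 0]` placeholder when one of the four markers is not detected, which would distort the transform. As a hedged alternative, the ID-to-slot mapping can be written as a table that reports missing markers. This is a sketch only: `ID_TO_SLOT` and `order_marker_points` are hypothetical names, and the inputs are plain lists standing in for the values extracted from `ids` and `corners`.

```python
# Marker ID -> index in the source-point list (the 1, 0, 2, 3 order of the script)
ID_TO_SLOT = {1: 0, 0: 1, 2: 2, 3: 3}


def order_marker_points(marker_ids, first_corners):
    """Arrange one (x, y) point per marker into the order expected by the transform.

    marker_ids: iterable of detected marker IDs.
    first_corners: iterable of (x, y), the first corner of each marker,
                   in the same order as marker_ids.
    Raises ValueError if any of the four expected markers is missing.
    """
    slots = [None] * 4
    for marker_id, point in zip(marker_ids, first_corners):
        slot = ID_TO_SLOT.get(int(marker_id))
        if slot is not None:  # ignore markers that are not part of the sheet
            slots[slot] = (float(point[0]), float(point[1]))
    missing = [mid for mid, slot in ID_TO_SLOT.items() if slots[slot] is None]
    if missing:
        raise ValueError("markers not detected: %s" % sorted(missing))
    return slots


print(order_marker_points([3, 0, 2, 1], [(4, 4), (1, 1), (3, 3), (2, 2)]))
```

With the arrays from the script, the inputs would be something like `[row[0] for row in ids]` and `[c[0][0] for c in corners]`.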

Trying out the aruco module

Let's try out the aruco module.

Installation

On my Windows machine:

pip install opencv-contrib-python

On a Linux machine, I installed opencv-python and opencv-contrib-python from pip, but got an error saying that aruco did not exist, so I compiled opencv-python and opencv-contrib-python from source instead.

Checking that it works

    import cv2
    aruco = cv2.aruco
    print(dir(aruco))

Creating marker images

These are 4x4-cell markers rendered as images 64 pixels on a side.

DICT_4X4_50 should provide 50 different markers, with IDs 0 to 49.

    #!/usr/bin/env python
    # -*- coding: utf-8 -*-

    import cv2

    aruco = cv2.aruco
    dictionary = aruco.getPredefinedDictionary(aruco.DICT_4X4_50)

    # Draw the markers with IDs 0 to 4, each 64 pixels on a side
    for marker_id in range(5):
        marker = aruco.drawMarker(dictionary, marker_id, 64)
        name = '%d.64' % marker_id
        cv2.imshow(name, marker)
        cv2.imwrite(name + '.png', marker)

    cv2.waitKey(0)
    cv2.destroyAllWindows()

Detecting markers

After detection, the results are drawn onto the image.

aruco.drawDetectedMarkers would draw all three kinds of data in a single call, but to understand what is actually returned I drew them one at a time.

    #!/usr/bin/env python
    # -*- coding: utf-8 -*-

    import cv2
    import numpy as np

    aruco = cv2.aruco
    dictionary = aruco.getPredefinedDictionary(aruco.DICT_4X4_50)

    #img = cv2.imread('100.jpg')
    #img = cv2.imread('101.jpg')
    img = cv2.imread('DSC_3067.jpg')
    img = cv2.resize(img, None, fx=0.5, fy=0.5)
    cv2.imwrite('resize.png', img)

    corners, ids, rejectedImgPoints = aruco.detectMarkers(img, dictionary)
    print(corners)
    print(ids)
    #print(rejectedImgPoints)

    #aruco.drawDetectedMarkers(img, corners, ids, (0,255,0))

    for i, corner in enumerate(corners):
        # Outline the marker and label its first corner with the marker ID
        points = corner[0].astype(np.int32)
        cv2.polylines(img, [points], True, (0, 255, 255))
        print(type(points[0]))
        cv2.putText(img, str(ids[i][0]), tuple(map(int, points[0])), cv2.FONT_HERSHEY_PLAIN, 1, (0, 0, 0), 1)

    cv2.imshow('drawDetectedMarkers', img)
    cv2.imwrite('drawDetectedMarkers.png', img)

    cv2.waitKey(0)
    cv2.destroyAllWindows()

Before:

After:
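To make the returned data a little more concrete: as the indexing in the script suggests (`corner[0]` and `ids[i][0]`), each entry of `corners` holds the four (x, y) corner points of one marker behind a leading axis of length 1, and `ids` holds one single-element row per marker. The sketch below uses nested lists with that same layout to compute each marker's center. `marker_centers` is a hypothetical helper, not part of the aruco module.

```python
def marker_centers(corners, ids):
    """Map each marker ID to the mean of its four corner points.

    corners: sequence of entries shaped like [[(x, y), (x, y), (x, y), (x, y)]]
    ids:     sequence of single-element rows such as [[3], [7]]
    """
    centers = {}
    for corner, id_row in zip(corners, ids):
        pts = corner[0]  # the four corner points of this marker
        cx = sum(float(p[0]) for p in pts) / len(pts)
        cy = sum(float(p[1]) for p in pts) / len(pts)
        centers[int(id_row[0])] = (cx, cy)
    return centers


# Stand-in data laid out like the detection result
corners = [
    [[(0, 0), (10, 0), (10, 10), (0, 10)]],
    [[(20, 20), (40, 20), (40, 40), (20, 40)]],
]
ids = [[3], [7]]
print(marker_centers(corners, ids))
```

Because the helper only uses indexing and iteration, it should accept the NumPy arrays from the script as well as these lists.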

Straightening a marked sheet with OpenCV

Let's use marker detection to correct the orientation of a sheet.

Camera distortion correction (calibration) is out of scope here.

The sheet has a black circle marker in each of its four corners.

I want to correct its tilt.

The flow is:

Marker detection

Reordering the coordinates

Perspective transform

    #!/usr/bin/env python
    # -*- coding: utf-8 -*-

    # Circle detection and perspective transform

    import cv2
    import numpy as np


    def main():
        image = cv2.imread('DSC_3009.jpg')
        #cv2.imshow('original', image)
        height, width, depth = image.shape

        # Resize (integer division, since cv2.resize needs ints)
        image = cv2.resize(image, (width // 4, height // 4))
        imageOrg = image.copy()
        height, width, depth = image.shape

        # Convert to grayscale
        image = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY)

        # Gaussian filter
        image = cv2.GaussianBlur(image, (5, 5), 0)

        # Binarize
        ret, image = cv2.threshold(image, 127, 255, cv2.THRESH_BINARY)

        # Python: cv2.findCirclesGridDefault(image, patternSize[, centers[, flags]]) → retval, centers
        # CALIB_CB_SYMMETRIC_GRID uses symmetric pattern of circles.
        # CALIB_CB_CLUSTERING uses a special algorithm for grid detection. It is more robust to perspective distortions but much more sensitive to background clutter.
        retval, centers = cv2.findCirclesGrid(image, (2, 2), flags=cv2.CALIB_CB_SYMMETRIC_GRID + cv2.CALIB_CB_CLUSTERING)
        if not retval:
            # Without the four circles there is nothing to transform
            print('circle grid not found')
            return 1
        print(centers)

        image = cv2.cvtColor(image, cv2.COLOR_GRAY2BGR)
        image = cv2.drawChessboardCorners(image, (2, 2), centers, retval)

        # Reordered array for the perspective transform
        points = np.array([centers[0], centers[2], centers[3], centers[1]])
        print(points)

        # Bounding rectangle
        bRect = cv2.boundingRect(points)
        print(bRect)
        cv2.rectangle(image, (bRect[0], bRect[1]), (bRect[0] + bRect[2], bRect[1] + bRect[3]), (255, 0, 0), 2)

        cv2.imshow('drawChessboardCorners', image)
        cv2.imwrite('90.drawChessboardCorners.png', image)

        # Perspective transform
        # Order: top-right, top-left, bottom-left, bottom-right
        pts1 = np.float32(points)
        pts2 = np.float32([
            [bRect[0] + bRect[2], bRect[1]],
            [bRect[0], bRect[1]],
            [bRect[0], bRect[1] + bRect[3]],
            [bRect[0] + bRect[2], bRect[1] + bRect[3]],
        ])
        print(pts1)
        print(pts2)

        M = cv2.getPerspectiveTransform(pts1, pts2)
        imageOrg = cv2.warpPerspective(imageOrg, M, (width, height))

        cv2.imshow('getPerspectiveTransform', imageOrg)
        cv2.imwrite('90.getPerspectiveTransform.png', imageOrg)

        cv2.waitKey(0)
        cv2.destroyAllWindows()
        return 0


    if __name__ == '__main__':
        try:
            main()
        except KeyboardInterrupt:
            pass

Detected circles and the bounding rectangle

Done

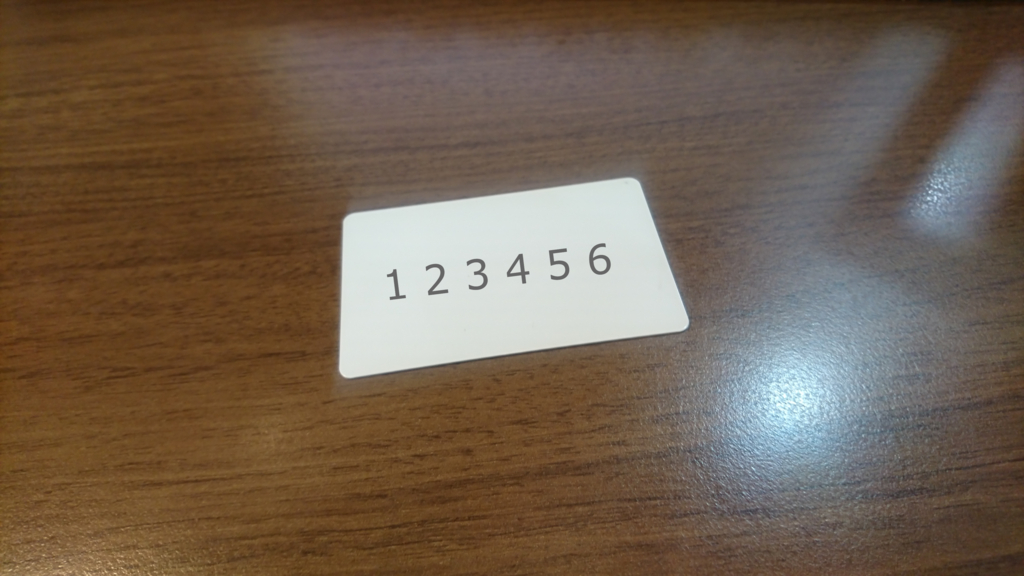

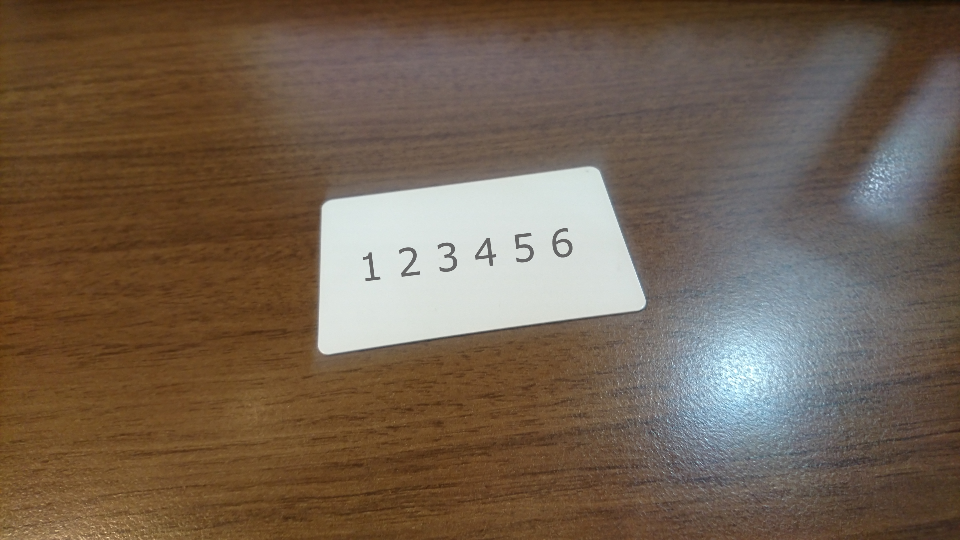

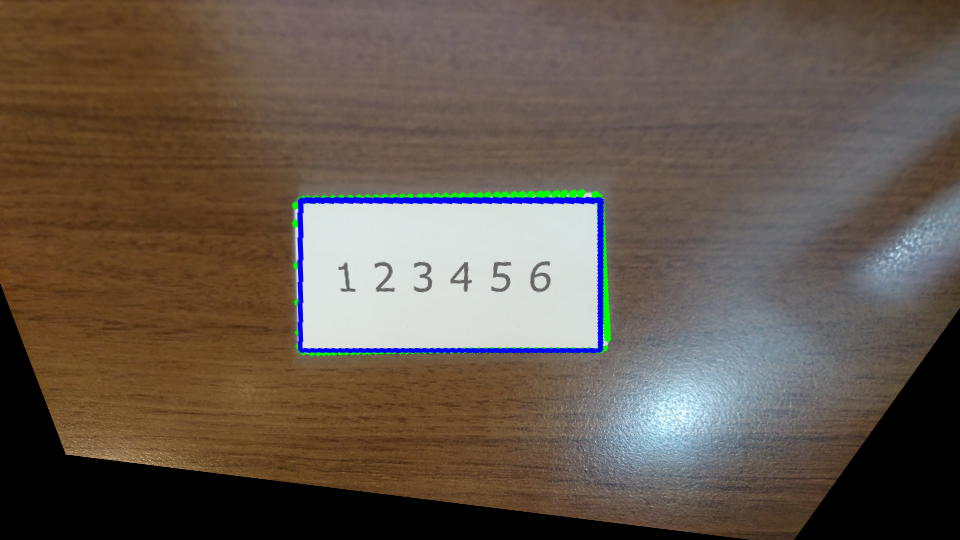
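For readers curious about what the perspective-transform step computes, here is a rough sketch in plain Python. It assumes the standard formulation: a 3x3 matrix with its bottom-right entry fixed to 1 is determined by four point pairs through eight linear equations. This is not OpenCV's implementation, and `solve_linear`, `perspective_matrix` and `apply_perspective` are hypothetical names; the sample coordinates are made up.

```python
def solve_linear(a, b):
    """Solve a*x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [[float(v) for v in row] + [float(rhs)] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("degenerate point configuration")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                for c in range(col, n + 1):
                    m[r][c] -= factor * m[col][c]
    return [m[i][n] / m[i][i] for i in range(n)]


def perspective_matrix(src, dst):
    """3x3 matrix mapping the four src points onto the four dst points."""
    a, b = [], []
    for (x, y), (u, v) in zip(src, dst):
        # u = (h0*x + h1*y + h2) / (h6*x + h7*y + 1), and likewise for v
        a.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        b.append(u)
        a.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        b.append(v)
    h = solve_linear(a, b)
    return [h[0:3], h[3:6], [h[6], h[7], 1.0]]


def apply_perspective(matrix, point):
    """Map one (x, y) point through the matrix, including the divide by w."""
    x, y = point
    w = matrix[2][0] * x + matrix[2][1] * y + matrix[2][2]
    u = (matrix[0][0] * x + matrix[0][1] * y + matrix[0][2]) / w
    v = (matrix[1][0] * x + matrix[1][1] * y + matrix[1][2]) / w
    return (u, v)


# Made-up corners in the order top-right, top-left, bottom-left, bottom-right
SRC = [(120, 80), (30, 60), (10, 200), (140, 230)]
DST = [(150, 0), (0, 0), (0, 200), (150, 200)]
H = perspective_matrix(SRC, DST)
for p in SRC:
    print(p, apply_perspective(H, p))
```

The point order matters: pairing the same source corners with a differently ordered destination list yields a rotated or mirrored result, which is why the reordering step comes before the transform.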

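Straightening a card with OpenCV

Putting what I learned from the tutorials to use, I'll take a photo of a card lying on a desk, shot normally (not from directly above), and straighten it.

The card was plain white, so I overlaid some arbitrary text to make its orientation visible. (Maybe I should have just written on the card with a pen...)

After some trial and error, the processing flow is:

Detect white (after converting to HSV)

Binarize

Detect the contour

Approximate the contour

Get the coordinates of the four corners

Apply a perspective transform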

    #!/usr/bin/env python
    # -*- coding: utf-8 -*-

    import cv2
    import numpy as np
    from pprint import pprint


    def main():
        image = cv2.imread('DSC_2848.2.jpg')
        #cv2.imshow('original', image)

        # Resize (integer division, since cv2.resize needs ints)
        height, width, depth = image.shape
        image = cv2.resize(image, (width // 4, height // 4))
        imageOrg = image
        cv2.imshow('resize', image)
        cv2.imwrite('50.resize.png', image)

        # Convert to HSV
        image = cv2.cvtColor(image, cv2.COLOR_BGR2HSV)

        # Extract white: very bright areas
        threshold_min = np.array([0, 0, 180], np.uint8)
        threshold_max = np.array([255, 255, 255], np.uint8)
        image = cv2.inRange(image, threshold_min, threshold_max)

        # Convert back to 3 channels (inRange returns a single-channel mask)
        image = cv2.cvtColor(image, cv2.COLOR_GRAY2RGB)
        cv2.imshow('inRange', image)
        cv2.imwrite('50.inRange.png', image)

        # Noise removal
        kernel = np.ones((9, 9), np.uint8)
        image = cv2.morphologyEx(image, cv2.MORPH_OPEN, kernel)
        image = cv2.morphologyEx(image, cv2.MORPH_CLOSE, kernel)
        cv2.imshow('removeNoise', image)
        cv2.imwrite('50.removeNoise.png', image)

        # Invert
        image = 255 - image

        # Contour extraction
        gray_min = np.array([0], np.uint8)
        gray_max = np.array([128], np.uint8)
        threshold_gray = cv2.inRange(image, gray_min, gray_max)
        # [-2:] copes with findContours returning either 2 or 3 values
        contours, hierarchy = cv2.findContours(threshold_gray, cv2.RETR_TREE, cv2.CHAIN_APPROX_SIMPLE)[-2:]

        # Find the contour with the largest area
        max_area_contour = None
        max_area = 0
        for contour in contours:
            area = cv2.contourArea(contour)
            if max_area < area:
                max_area = area
                max_area_contour = contour
        if max_area_contour is None:
            print('no contour found')
            return 1
        #print(max_area_contour)

        # Colorize
        #image = cv2.cvtColor(image, cv2.COLOR_GRAY2RGBA)
        cv2.drawContours(imageOrg, [max_area_contour], -1, (0, 255, 0), 5)

        # Approximate the contour
        epsilon = 0.01 * cv2.arcLength(max_area_contour, True)
        approx = cv2.approxPolyDP(max_area_contour, epsilon, True)
        #print(approx)
        if len(approx) != 4:
            # The perspective transform below needs exactly four corners
            print('expected 4 corners, got %d' % len(approx))
            return 1
        cv2.drawContours(imageOrg, [approx], -1, (255, 0, 0), 3)

        cv2.imshow('findContours', imageOrg)
        cv2.imwrite('50.findContours.png', imageOrg)

        # Is sorting needed?
        #pprint(approx)
        #approx = np.sort(approx, axis=1)
        #approx = np.sort(approx, axis=0)
        #pprint(approx)

        height, width, depth = imageOrg.shape

        # Perspective transform
        pts1 = np.float32(approx)
        pts2 = np.float32([[600, 200], [300, 200], [300, 350], [600, 350]])
        pprint(pts1)
        pprint(pts2)

        M = cv2.getPerspectiveTransform(pts1, pts2)
        imageOrg = cv2.warpPerspective(imageOrg, M, (width, height))

        cv2.imshow('getPerspectiveTransform', imageOrg)
        cv2.imwrite('50.getPerspectiveTransform.png', imageOrg)

        cv2.waitKey(0)
        cv2.destroyAllWindows()
        return 0


    if __name__ == '__main__':
        try:
            main()
        except KeyboardInterrupt:
            pass

Resize

Detect white and binarize

Noise removal

Contour detection

Perspective transform
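A note on the white-detection step: the lower bound `[0, 0, 180]` only constrains the V channel, and for 8-bit images OpenCV's HSV conversion takes V as the largest of the B, G and R values. So the threshold keeps every bright pixel, including strongly colored ones. The sketch below reproduces that per-pixel test in plain Python and adds an optional saturation limit. The `s_max` value of 60 is a hypothetical choice, not something taken from the script.

```python
def hsv_value(bgr):
    """V channel for an 8-bit pixel: the largest of B, G, R."""
    return max(bgr)


def hsv_saturation(bgr):
    """S channel for an 8-bit pixel, scaled to 0..255."""
    v = max(bgr)
    if v == 0:
        return 0
    return int(round(255.0 * (v - min(bgr)) / v))


def is_bright(bgr, v_min=180):
    """Same test as the script's threshold: only V is constrained."""
    return hsv_value(bgr) >= v_min


def is_white(bgr, v_min=180, s_max=60):
    """Stricter variant: bright and weakly saturated (s_max is an assumption)."""
    return is_bright(bgr, v_min) and hsv_saturation(bgr) <= s_max


for pixel in [(250, 250, 245), (0, 0, 255), (100, 120, 90)]:
    print(pixel, is_bright(pixel), is_white(pixel))
```

In the OpenCV version this would correspond to lowering the second component of the upper bound passed to `cv2.inRange`. For a white card on a dark desk the original threshold is probably sufficient.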

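As for the array returned by cv2.approxPolyDP: since I passed True as the third argument, it should return a closed polygon.

closed – If true, the approximated curve is closed (its first and last vertices are connected). Otherwise, it is not closed.

However, I don't know whether the vertex order is fixed or can vary.

In this program the order was top-right > top-left > bottom-left > bottom-right (counter-clockwise starting from the top-right), but whether that holds every time, I can't say.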
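One way to avoid depending on the returned order is to sort the four corners explicitly before the transform. The sketch below uses a common heuristic based on x + y and x - y in image coordinates (y grows downward). `order_corners` is a hypothetical helper, and the heuristic is only expected to hold when the card is rotated by clearly less than 45 degrees.

```python
def order_corners(points):
    """Return four corners as [top-right, top-left, bottom-left, bottom-right].

    points: four (x, y) pairs in any order, in image coordinates.
    """
    pts = [(float(p[0]), float(p[1])) for p in points]
    if len(pts) != 4:
        raise ValueError("expected exactly 4 points, got %d" % len(pts))
    top_left = min(pts, key=lambda p: p[0] + p[1])      # smallest x + y
    bottom_right = max(pts, key=lambda p: p[0] + p[1])  # largest x + y
    top_right = max(pts, key=lambda p: p[0] - p[1])     # largest x - y
    bottom_left = min(pts, key=lambda p: p[0] - p[1])   # smallest x - y
    return [top_right, top_left, bottom_left, bottom_right]


shuffled = [(10, 200), (140, 230), (120, 80), (30, 60)]
print(order_corners(shuffled))
```

The array from cv2.approxPolyDP has an extra axis, so it would need flattening first, for example `order_corners(approx.reshape(-1, 2))`. The output order matches the destination points used in the script.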

OpenCV tutorial challenge 82: Image Inpainting

Image Inpainting - OpenCV-Python Tutorials 1 documentation

    #!/usr/bin/env python
    # -*- coding: utf-8 -*-

    import cv2

    img = cv2.imread('170519-144402.cut.jpg')
    # Read the mask as a single-channel grayscale image
    mask = cv2.imread('170519-144402.mask.jpg', 0)

    # INPAINT_TELEA
    dst = cv2.inpaint(img, mask, 3, cv2.INPAINT_TELEA)
    cv2.imshow('inpaint.INPAINT_TELEA', dst)
    cv2.imwrite('inpaint.INPAINT_TELEA.png', dst)

    # INPAINT_NS
    dst = cv2.inpaint(img, mask, 3, cv2.INPAINT_NS)
    cv2.imshow('inpaint.INPAINT_NS', dst)
    cv2.imwrite('inpaint.INPAINT_NS.png', dst)

    cv2.waitKey(0)
    cv2.destroyAllWindows()

Trying it out

Mask

Result: INPAINT_TELEA

Result: INPAINT_NS

Maybe the masked area was simply too large?
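To put a number on "too large", one could measure how much of the image the mask covers. cv2.inpaint fills every non-zero mask pixel, so a mask stored as JPEG may also carry faint non-zero noise outside the intended region. That is only a guess about this particular file. The sketch below works on plain nested lists, and `binarize_mask` and `mask_coverage` are hypothetical helpers.

```python
def binarize_mask(mask_rows, threshold=127):
    """Return a copy with values above threshold set to 255 and the rest to 0."""
    return [[255 if value > threshold else 0 for value in row] for row in mask_rows]


def mask_coverage(mask_rows):
    """Fraction of pixels that are non-zero, i.e. that would be inpainted."""
    total = sum(len(row) for row in mask_rows)
    if total == 0:
        return 0.0
    nonzero = sum(1 for row in mask_rows for value in row if value != 0)
    return nonzero / float(total)


# Toy 3x4 mask: a bright region plus faint JPEG-like noise (values 2, 3, 5)
noisy = [
    [0, 3, 0, 0],
    [5, 250, 255, 0],
    [0, 248, 252, 2],
]
clean = binarize_mask(noisy)
print(mask_coverage(noisy), mask_coverage(clean))
```

On real images the same checks can be done with `cv2.threshold(mask, 127, 255, cv2.THRESH_BINARY)` and `cv2.countNonZero(mask)`. Saving the mask as PNG would avoid the compression noise altogether.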